Getting content cited in ChatGPT but missing from Gemini or Perplexity AI can feel inconsistent, but it’s not random. Each system uses a different approach to finding, ranking, and selecting sources, which directly impacts whether your content gets referenced.

Because Gemini, ChatGPT, and Perplexity build their citations on different retrieval and ranking logic, the same page can appear in one system and be completely ignored in another.

In this blog, we break down how these citation systems work, why these gaps happen, and what actually decides whether your content gets picked or ignored across major AI platforms.


Average AI Citations by Model

| AI System | Average Sources per Answer | Range / Distribution | Key Characteristics |
|---|---|---|---|
| Perplexity AI | ~8.2 sources | 5–12 sources in ≈81% of responses | Highly citation-dense; strongly retrieval-driven and transparent |
| ChatGPT (GPT-4o / o1) | ~3.2 sources | 1–6 sources depending on browsing mode | Selective citations; Wikipedia (~5%) and Reddit (~3%) are among the commonly cited domains |
| Gemini (AI Overviews / Gemini 3) | ~13.3 sources (AI Overviews) | Varies widely across surfaces | High citation volume in AI Overviews; standalone Gemini results are less consistent |

How Gemini Generates Citations

Gemini can generate citations through search-grounded retrieval connected to Google Search or related grounding systems.

Process

- Query decomposition: Breaks the user input into smaller search queries.

- Real-time retrieval: Fetches relevant web results or grounded sources.

- Source ranking: Prioritises relevant and authoritative content.

- Answer synthesis: Combines information from multiple sources.

- Citation embedding: Adds references within or alongside the response.
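The retrieval and ranking steps above can be illustrated with a small Python sketch. Google does not publish how Gemini decomposes queries or ranks sources, so everything here is an assumption: the `decompose` rule is a toy, `search` stands in for live results, and reciprocal rank fusion is a generic rank-merging technique used as a stand-in, not Gemini's actual method.

```python
from collections import defaultdict
from typing import Callable


def decompose(query: str) -> list[str]:
    """Hypothetical decomposition rule: split a compound question on ' and '."""
    parts = [p.strip() for p in query.split(" and ") if p.strip()]
    return parts or [query]


def fuse_rankings(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Reciprocal rank fusion: pages that rank well for several sub-queries rise."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, url in enumerate(ranking, start=1):
            scores[url] += 1.0 / (k + rank)
    # Highest fused score first; ties broken alphabetically for a stable order
    return sorted(scores, key=lambda url: (-scores[url], url))


def grounded_sources(query: str, search: Callable[[str], list[str]], top_n: int = 3) -> list[str]:
    """Decompose, retrieve per sub-query, then keep the top fused sources to cite."""
    rankings = [search(sub_query) for sub_query in decompose(query)]
    return fuse_rankings(rankings)[:top_n]


# Toy "search index" standing in for live web results (hypothetical data)
FAKE_INDEX = {
    "what is schema markup": ["a.com/schema", "b.com/seo", "c.com/json-ld"],
    "how does it affect ai citations": ["b.com/seo", "d.com/ai", "a.com/schema"],
}
sources = grounded_sources(
    "what is schema markup and how does it affect ai citations",
    lambda q: FAKE_INDEX.get(q, []),
)
print(sources)  # → ['b.com/seo', 'a.com/schema', 'd.com/ai']
```

In this toy run, `b.com/seo` ranks well for both sub-queries and overtakes `a.com/schema`, which tops only one. Under these assumptions, a page that answers several facets of a question gets more chances to be picked.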

Citation Behavior

Gemini citations are often embedded within the response rather than placed in a separate reference list. Depending on the product surface and mode, citations may appear as inline links, grouped references, or a cited sources section.

Citations are generally more visible when the response closely follows source material or when grounding is enabled. However, not every statement will necessarily be linked to a specific source.

Why Gemini Is Not Referencing My Content

- Low authority: Few backlinks or weak trust indicators

- Poor structure: Content that lacks clear organisation or headings

- Indexing issues: Page not properly indexed or not easily crawlable

- Technical limitations: Slow load times, missing metadata, or poor mobile support
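Some of these causes can be spot-checked on a single page. The sketch below uses Python's standard `html.parser` to flag a few on-page signals (title, meta description, viewport tag for mobile, JSON-LD schema, subheadings). The checklist and the two-subheading threshold are illustrative assumptions, not published Gemini criteria, and authority or indexing issues still need external tools such as Google Search Console.

```python
from html.parser import HTMLParser


class PageAudit(HTMLParser):
    """Records a few on-page signals from the checklist above (illustrative only)."""

    def __init__(self) -> None:
        super().__init__()
        self.has_title = False
        self.has_meta_description = False
        self.has_viewport = False
        self.has_json_ld = False
        self.subheadings = 0

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag == "title":
            self.has_title = True
        elif tag == "meta":
            name = (attr_map.get("name") or "").lower()
            if name == "description" and attr_map.get("content"):
                self.has_meta_description = True
            elif name == "viewport":
                self.has_viewport = True  # basic mobile-friendliness hint
        elif tag == "script" and attr_map.get("type") == "application/ld+json":
            self.has_json_ld = True  # schema markup present
        elif tag in ("h2", "h3"):
            self.subheadings += 1


def audit(html: str, min_subheadings: int = 2) -> list[str]:
    """Return human-readable issues; an empty list means no flags were raised."""
    page = PageAudit()
    page.feed(html)
    issues = []
    if not page.has_title:
        issues.append("missing <title>")
    if not page.has_meta_description:
        issues.append("missing meta description")
    if not page.has_viewport:
        issues.append("missing viewport meta tag")
    if not page.has_json_ld:
        issues.append("no JSON-LD structured data")
    if page.subheadings < min_subheadings:
        issues.append(f"fewer than {min_subheadings} h2/h3 subheadings")
    return issues


sample = (
    '<html><head><title>AI citations</title>'
    '<meta name="viewport" content="width=device-width"></head>'
    '<body><h2>Intro</h2><p>Body text</p></body></html>'
)
print(audit(sample))
```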

Gemini Favours Schema + Reference-Style Content

Gemini tends to prioritise structured and machine-readable content, which shapes which pages end up in Gemini citations.

Content with schema markup, clear hierarchy, and factual organisation is easier to interpret and rank. Selection is influenced by authority signals, topical relevance, and freshness.

Pages formatted like reference resources (definitions, structured sections, clear headings) are more likely to be cited.

Gemini citations = structured input → higher citation probability
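For reference, here is what schema markup looks like in practice. This minimal Python helper (`faq_jsonld` is a hypothetical name) emits a schema.org `FAQPage` block built from `Question` and `Answer` items as JSON-LD. Adding markup like this makes a page more machine-readable; it does not guarantee that Gemini will cite it.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Wrap (question, answer) pairs in a schema.org FAQPage JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


print(faq_jsonld([
    ("Why is ChatGPT not giving me sources or citations?",
     "ChatGPT only shows citations when browsing or retrieval is enabled."),
]))
```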

Key Insight

Gemini citations tend to emphasise freshness and grounded search results, but they may provide less granular claim-to-source mapping than citation-first systems.


How ChatGPT Citations Work

ChatGPT can generate answers in two main ways: either from its trained internal knowledge or, when enabled, by using external retrieval tools like browsing or search. If it relies only on internal knowledge, it does not include citations or links, since no external sources are being used.

Process

- Initial generation: Drafts a response from model knowledge.

- Conditional retrieval: Retrieves external sources when browsing or search tools are enabled.

- Source selection: Selects relevant information from the retrieved sources.

- Answer refinement: Refines and integrates that information into the final response.

- Citation formatting: Adds citations only where specific claims are supported by external sources.
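The key behaviour in this process, that citations only exist when something was retrieved, can be modelled in a few lines. OpenAI does not document ChatGPT's internal pipeline, so `respond`, the `search` callable, and `max_citations` are hypothetical names chosen to mirror the steps above, not a real API.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical search tool: returns (url, snippet) pairs for a query
SearchFn = Callable[[str], list[tuple[str, str]]]


@dataclass
class Answer:
    text: str
    citations: list[str] = field(default_factory=list)


def respond(draft: str, query: str, search: Optional[SearchFn] = None,
            max_citations: int = 3) -> Answer:
    """Model the observable behaviour: no retrieval, no citations."""
    if search is None:
        # Answer comes from model knowledge alone, so there is nothing to link
        return Answer(text=draft)
    # Only a few retrieved results are kept and used
    used = search(query)[:max_citations]
    # Each appended snippet carries a marker pointing to the source it came from
    text = draft + "".join(f" {snippet} [{i}]" for i, (_, snippet) in enumerate(used, start=1))
    return Answer(text=text, citations=[url for url, _ in used])


offline = respond("Paris is the capital of France.", "capital of France")
print(offline.citations)  # → []

fake_search = lambda q: [
    ("https://example.com/a", "Fact A."),
    ("https://example.com/b", "Fact B."),
    ("https://example.com/c", "Fact C."),
]
online = respond("Draft.", "latest news", search=fake_search, max_citations=2)
print(online.text)  # → Draft. Fact A. [1] Fact B. [2]
```

The offline call returns no links at all, while the retrieval call cites only the two sources whose snippets were actually used.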

Citation Behavior

Citations are context-dependent: they appear mainly when external sources are used, especially for recent events, factual verification, or when the user requests references. They are shown as links or reference markers tied to supporting claims, not as a full list for every answer.

ChatGPT Favours Structured Lists + Comparisons

ChatGPT prioritises output structure and readability, influencing how ChatGPT citations are presented.

The system often reformats information into lists, steps, or comparisons, even if the original source is unstructured. This improves clarity and usability.

Content that is already modular, segmented, and well-organised is more likely to be accurately reflected in responses.

ChatGPT citations = structured content → better output alignment

Are ChatGPT Citations Reliable?

ChatGPT citations are generally reliable when they are generated in browsing or retrieval-enabled modes and backed by clear, verifiable sources.

In such cases, ChatGPT citations can support factual explanations, summaries, and research-based answers, especially when the referenced material is directly accessible and closely aligned with the response content.

However, there are some limitations. ChatGPT citations are not always shown, since certain responses rely only on model knowledge without live retrieval.

Even when sources are provided, the content may be condensed or rephrased, which can slightly change the original meaning.

In addition, only selected references are included, meaning some relevant sources may not appear.

Key Insight

No retrieval means no citations, and even with retrieval, citations only appear selectively where external evidence is actually used.


Summary: Gemini vs ChatGPT Citations

| Feature | Gemini | ChatGPT |
|---|---|---|
| How sources are shown | Built into the response with inline links, sometimes subtle | Presented as organized links, either inline or in groups |
| Citation visibility | Moderate (available but not always clearly emphasized) | Variable (depends on context or user input) |
| Citation trigger | Automatically included through search integration | Added when needed (browsing, real-time queries, or request) |
| Citation style | Embedded and search-oriented | Structured and explanation-driven |
| Claim-to-source mapping | Partial (not every claim is linked) | Selective (focus on key points) |
| Data source | Google Search index (real-time) | Model knowledge with optional live retrieval |
| Key differences | Strong real-time grounding, less explicit attribution | Clear formatting, but citations may not always appear |

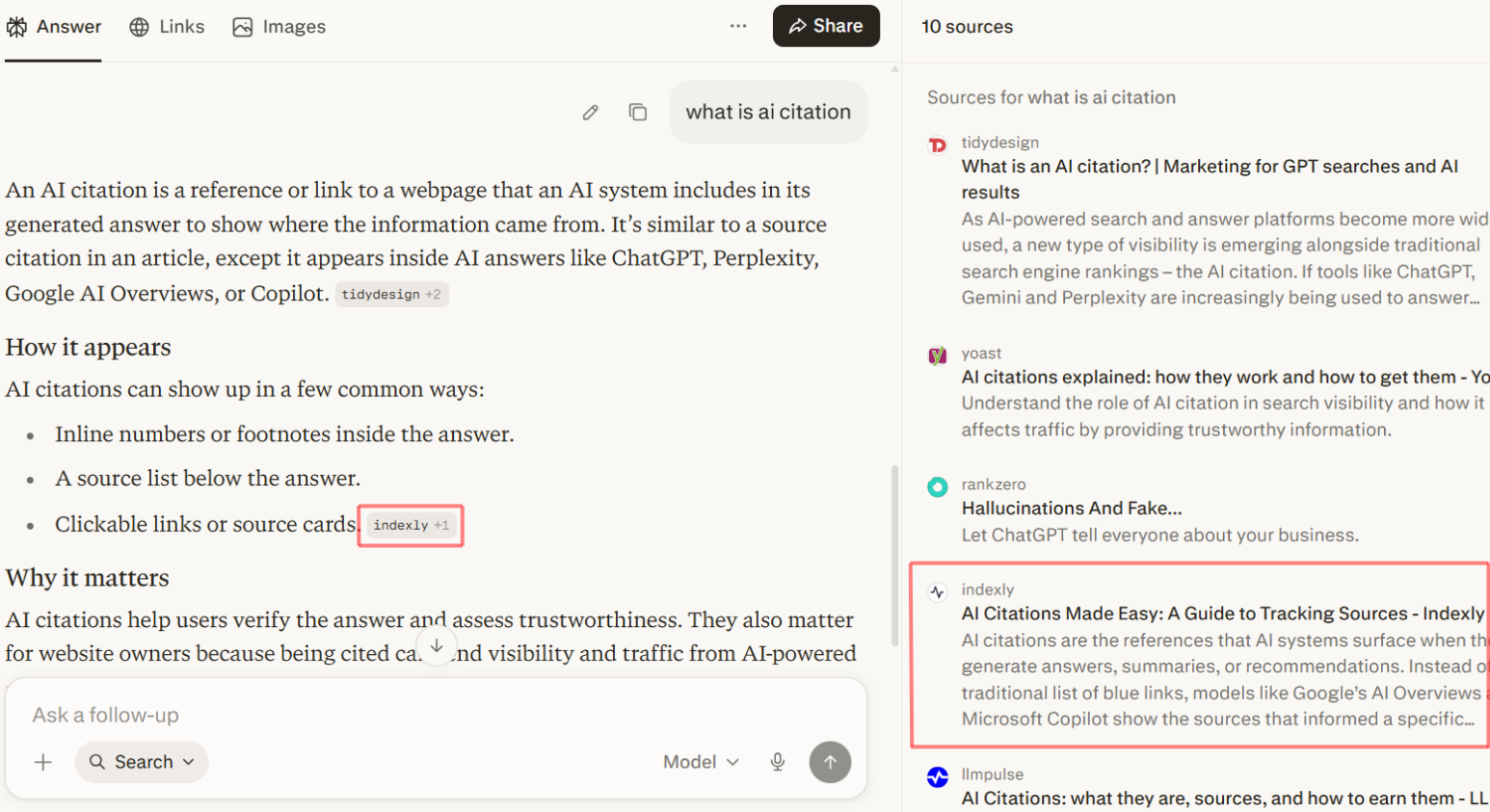

How Perplexity Citations Are Generated

Perplexity uses a retrieval-first approach in which sources are central to answer generation.

Process

- Query parsing: Interprets the user’s intent.

- Multi-source retrieval: Collects information from multiple sources.

- Ranking: Filters for relevance and quality.

- Evidence-based synthesis: Builds an answer from retrieved material.

- Inline citation mapping: Attaches sources to supporting statements.
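Inline citation mapping can be sketched as matching each claim against the retrieved texts and appending numbered markers. Perplexity does not publish its matching logic; the word-overlap test and the `min_overlap` threshold below are crude, hypothetical stand-ins for real evidence matching.

```python
import re


def words(text: str) -> set[str]:
    """Lower-cased alphanumeric tokens; a crude representation of meaning."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def cite_claims(claims: list[str], sources: list[str], min_overlap: float = 0.5) -> list[str]:
    """Append [n] markers linking each claim to the sources that appear to support it."""
    cited = []
    for claim in claims:
        claim_words = words(claim)
        refs = [
            n for n, source in enumerate(sources, start=1)
            # Share of the claim's words found in the source (hypothetical support test)
            if claim_words and len(claim_words & words(source)) / len(claim_words) >= min_overlap
        ]
        markers = "".join(f"[{n}]" for n in refs)
        cited.append(f"{claim} {markers}".rstrip())  # unsupported claims stay unmarked
    return cited


sources = [
    "Perplexity shows numbered citations inline.",
    "Gemini grounds answers in Google Search.",
]
claims = [
    "Perplexity shows numbered citations.",
    "Gemini grounds answers in Google Search.",
    "Bananas are yellow.",
]
for line in cite_claims(claims, sources):
    print(line)
```

The third claim has no supporting source, so it gets no marker, which is why not every sentence maps to a source.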

Citation Behavior

Perplexity usually shows citations by default and uses dense inline references to connect claims with sources. This makes its answers highly traceable, though not every sentence will map perfectly to a single source.

Perplexity Cites Densely but Filters Selectively

Perplexity AI pairs dense inline citations with a selective filtering step: Perplexity citations prioritise the most relevant sources rather than every document it retrieves.

The system retrieves multiple documents, applies relevance and credibility ranking, and filters out weaker or redundant sources before generating the final answer. As a result, only the highest-confidence sources are included.

This approach produces concise, evidence-backed responses that are easy to verify. However, filtering out weaker or overlapping sources can reduce source diversity and alternative viewpoints.

Perplexity citations = high precision, dense attribution, strong traceability
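The filtering step described above can be illustrated with a short Python sketch. The relevance and credibility scores, the `min_score` cut-off, and the rule that treats a second page from the same domain as redundant are all assumptions for illustration; Perplexity's actual ranking signals are not public.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class Doc:
    url: str
    relevance: float    # 0..1, hypothetical retrieval score
    credibility: float  # 0..1, hypothetical trust score


def select_sources(docs: list[Doc], min_score: float = 0.4, max_sources: int = 5) -> list[str]:
    """Rank by relevance x credibility, drop weak docs, keep one page per domain."""
    ranked = sorted(docs, key=lambda d: d.relevance * d.credibility, reverse=True)
    seen_domains: set[str] = set()
    chosen: list[str] = []
    for doc in ranked:
        if doc.relevance * doc.credibility < min_score:
            break  # list is sorted, so every remaining doc scores lower
        domain = urlparse(doc.url).netloc
        if domain in seen_domains:
            continue  # a second page from the same site is treated as redundant
        seen_domains.add(domain)
        chosen.append(doc.url)
        if len(chosen) == max_sources:
            break
    return chosen


docs = [
    Doc("https://a.org/guide", 0.9, 0.9),
    Doc("https://a.org/faq", 0.8, 0.9),
    Doc("https://b.com/post", 0.7, 0.8),
    Doc("https://c.net/spam", 0.9, 0.2),
]
print(select_sources(docs))  # → ['https://a.org/guide', 'https://b.com/post']
```

Here the second `a.org` page is dropped as redundant and the low-credibility `c.net` page falls below the cut-off, leaving two high-confidence sources.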

Key Insight

Perplexity places strong emphasis on transparency and auditability, making it the most citation-forward of the three in its core experience.

Summary: ChatGPT vs Perplexity Citations

| Feature | ChatGPT | Perplexity AI |
|---|---|---|
| Design priority | Explanation-first: prioritises explanation and clarity | Citation-first: prioritises citations and source evidence |
| How sources are shown | Presented as structured links, inline or grouped | Displayed as numbered inline references tied to claims |
| Citation presence | Not always included | Consistently included |
| Citation density | Low to moderate | High (many references per answer) |
| Transparency | Moderate | High, with clear traceability |
| Claim-to-source mapping | Selective (main points supported) | Extensive (most claims directly linked) |
| Answer dependency on sources | Can generate answers without sources | Fully reliant on retrieved sources |
| Key differences | Focuses on readability and structured explanations | Focuses on verification and source-backed answers |

Which AI Gives the Best Citations?

| AI Tool | Citation Strength | Best Use Case |
|---|---|---|
| Gemini | Strong real-time grounding with trusted sources | Current and up-to-date web information |
| ChatGPT | Moderate and context-dependent | Easy-to-understand explanations and learning |
| Perplexity | Highest level of transparency with visible sources | Research, validation, and fact-checking |

Conclusion

AI chatbots differ in how they handle citations based on their retrieval and response design. Gemini is best suited for real-time, search-based grounding, making it useful for fresh web information, though its attribution is not always fully detailed.

ChatGPT prioritizes clear, structured explanations and includes citations only in certain contexts, offering flexibility but less consistent source visibility.

Meanwhile, Perplexity AI focuses on transparency, linking responses to multiple sources by default, which makes it the most citation-driven and research-oriented option.

In summary, the best choice depends on the need: up-to-date results (Gemini), clear explanations (ChatGPT), or highly traceable sources (Perplexity).

FAQs

1. Why is ChatGPT not giving me sources or citations?

ChatGPT only shows citations when browsing or retrieval is enabled. In many cases, it generates answers from trained knowledge without linking sources unless explicitly required.

2. Why does Gemini show some links but not for every answer?

Gemini uses search grounding, but not every sentence is linked to a source. It usually shows citations only when content is directly pulled or closely matched from search results.

3. Why does Perplexity show more citations than ChatGPT or Gemini?

Perplexity AI is built to always retrieve and attach sources. It prioritizes traceability, so most claims are backed by a reference.

4. Can I trust AI-generated citations completely?

Not fully. AI citations are helpful for guidance, but users should still open and verify the original sources, especially for important or sensitive information.

5. Why is my website not showing up in AI citations like Gemini?

Your content may not appear in Gemini citations due to low authority, weak SEO structure, indexing issues, or lack of structured data (schema markup).

6. Which AI is best for accurate citations?

- Gemini → best for live web information

- ChatGPT → best for explanations with optional sources

- Perplexity → best for research with strong source tracing

7. Do AI citations always match the exact source text?

No. Most systems summarize or rephrase content. Perplexity tends to stay closest to direct source mapping, while the others prioritize readability.